YouTube made an important announcement two weeks ago about how it is changing its recommendations. In summary, YouTube is trying to protect us from our own cognitive biases. Unfortunately, humans are more likely to click on things that are sensational, negative, or fear-inducing.

To be fair, this didn’t start with the internet. For many years, the adage of news programs has been “if it bleeds, it leads.” Basically, news programs get better ratings covering murder than covering declining childhood mortality.

While cognitive biases have been around as long as humans have, the internet has undoubtedly made their effects worse. Our biases can now be exploited more efficiently than ever before. The Four, a book about Amazon, Apple, Facebook, and Google, describes this phenomenon with an example of how Facebook chooses the political stories it shows its users:

Moderates are hard to engage or predict… So, Facebook, and the rest of the algorithm-driven media, barely bothers with moderates. Instead, if it figures out you lean Republican, it will feed you more Republican stuff, until you’re ready for the heavy hitters, the GOP outrage: Breitbart, talk radio clips. You may even get to Alex Jones. The true believers, whether from left or right, click on the bait. The posts that get the most clicks are confrontational and angry. And those clicks drive up a post’s hit rate, which raises its ranking in both Google and Facebook. That in turn draws even more clicks and shares… And we all step deeper into our bubbles. This is how these algorithms reinforce polarization in our society.

It’s easy to dismiss Alex Jones fans as nut jobs, but it’s important to realize that we are all susceptible to these same patterns. The more content we consume that supports something we believe in, the more content supporting those beliefs gets fed to us. And we’re more likely to engage with the most polarizing of that content. Thus, our beliefs become more extreme over time.

YouTube, Facebook, and many other websites have known this for years; they can easily see engagement patterns in their data. One could make a legitimate argument that these companies have knowingly taken advantage of cognitive biases to increase clicks and engagement. The other viewpoint is that by not censoring content, these companies are encouraging free speech and allowing us to engage with what we want to engage with. It’s not Facebook’s fault that we’re a bunch of gullible monkeys.

Now, YouTube and Facebook are starting to take a more proactive role in helping us not get in our own way. The most important part of YouTube’s announcement from two weeks ago read:

[We are] taking a closer look at how we can reduce the spread of content that comes close to—but doesn’t quite cross the line of—violating our Community Guidelines. To that end, we’ll begin reducing recommendations of borderline content and content that could misinform users in harmful ways—such as videos promoting a phony miracle cure for a serious illness, claiming the earth is flat, or making blatantly false claims about historic events like 9/11.

This sounds almost identical to the announcement that Mark Zuckerberg made in his November memo, A Blueprint for Content Governance and Enforcement:

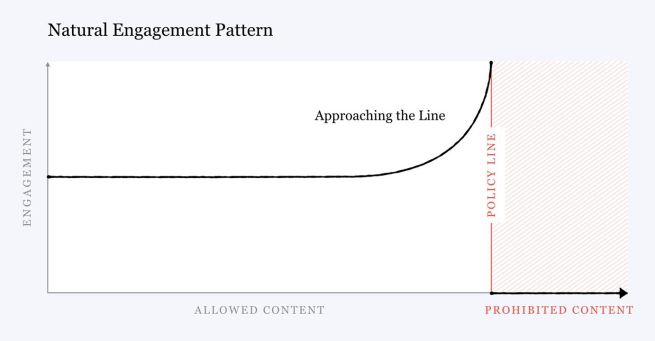

One of the biggest issues social networks face is that, when left unchecked, people will engage disproportionately with more sensationalist and provocative content… At scale it can undermine the quality of public discourse and lead to polarization. In our case, it can also degrade the quality of our services. Our research suggests that no matter where we draw the lines for what is allowed, as a piece of content gets close to that line, people will engage with it more on average — even when they tell us afterwards they don’t like the content.

In picture form, here is how Facebook sees engagement increase with borderline content:

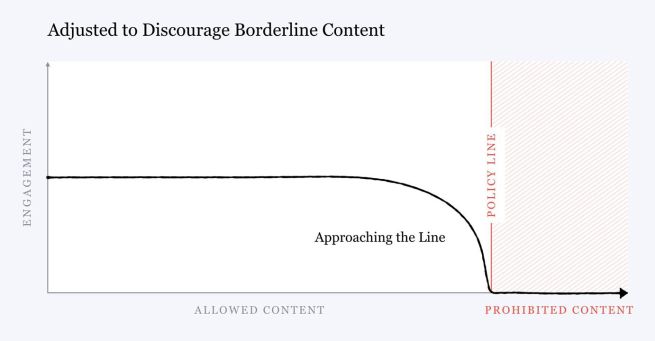

Going forward, Facebook is training its AI to detect borderline content and distribute it less. The hope is that our future engagement will look like this:

The risk here is that Facebook or YouTube takes this too far. Very few people will complain about Alex Jones getting banned from social media sites, but the more content these sites subjectively censor, the more control they have over what the public sees. And while Facebook censoring content isn’t a perfect analogy, if you truly believe in freedom of speech, you have to believe in it for those who are diametrically opposed to your beliefs.

While the above viewpoint is valid, all media companies censor what we see. Fox News and MSNBC sure as hell don’t present both sides fairly and equally. YouTube and Facebook are walking a fine line with censorship and it could backfire, but I’m happy they’re trying. If they strike the right balance, I believe they’ll make the internet a better place. Less polarization can only be a good thing.